Local OpenVINO Model Conversion¶

In this tutorial, you’ll learn how to convert OpenVINO IR models into the format required to run on DepthAI, even on a low-powered Raspberry Pi. I’ll introduce you to the OpenVINO toolset and the Open Model Zoo (from which we’ll download the face-detection-retail-0004 model), and show you how to generate the .blob file needed to run model inference on your DepthAI board.

Note

Besides local model conversion (which is more time-consuming), you can also use the Blobconverter web app or the blobconverter package.

Haven’t heard of OpenVINO or the Open Model Zoo? I’ll start with a quick introduction to why we need these tools.

What is OpenVINO?¶

Under the hood, DepthAI uses Intel technology to perform high-speed model inference. However, you can’t just dump your neural net into the chip and get high performance for free. That’s where OpenVINO comes in. OpenVINO is a free toolkit that converts a deep learning model into a format that runs on Intel hardware. Once the model is converted, it’s common to see Frames Per Second (FPS) improve by 25x or more. Are a couple of small steps worth a 25x FPS increase? Often, the answer is yes!

Check the OpenVINO toolkit website for installation instructions.

What is the Open Model Zoo?¶

In machine learning/AI, a collection of pre-trained models is called a “model zoo”. The Open Model Zoo is a library of freely available pre-trained models. It also contains scripts for downloading those models in a compile-ready format to run on DepthAI.

DepthAI is able to run many of the object detection models from the Zoo. Several of those models are included in the DepthAI GitHub repository.

Install OpenVINO¶

Note

DepthAI gets support for the new OpenVINO version within a few days of the release, so you should always use the latest OpenVINO version.

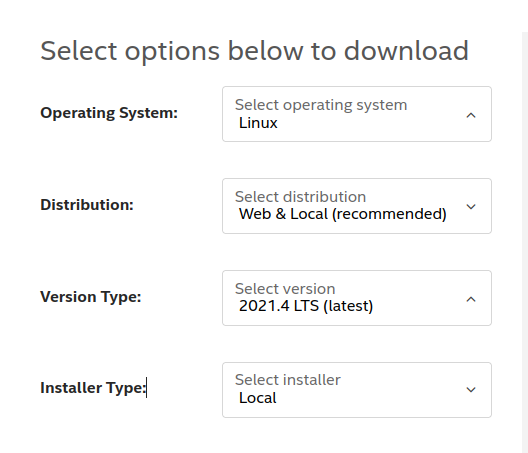

You can download the OpenVINO toolkit installer from the OpenVINO download page. We will use the latest version, which is 2021.4 at the time of writing.

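After downloading and extracting the archive, we can run the installer from the extracted folder (the folder name includes the version you downloaded, so it may differ from the one shown here):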

~/Downloads/l_openvino_toolkit_p_2021.3.394$ sudo ./install_GUI.sh

All the components that we need will be installed by default. Our installation path will be ~/intel/openvino_2021 (default location), and we will use this path below.

Download the face-detection-retail-0004 model¶

Now that we have OpenVINO installed, we can use the model downloader to fetch the model:

cd ~/intel/openvino_2021/deployment_tools/tools/model_downloader

python3 -m pip install -r requirements.in

python3 downloader.py --name face-detection-retail-0004 --output_dir ~/

This will download the model files to ~/intel/. Specifically, the model files we need are located at:

cd ~/intel/face-detection-retail-0004/FP16

We will move into this folder so we can later compile this model into the required .blob.
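If you want to script this step, here is a minimal Python sketch that builds the same downloader command and predicts where the IR files should land. The helper names (build_download_command, expected_ir_dir) are hypothetical. The "intel" subfolder is an assumption based on the paths shown in this tutorial, so other models may be placed elsewhere.

```python
from pathlib import Path
from typing import List


def build_download_command(name: str, output_dir: Path) -> List[str]:
    """Build the downloader.py invocation used above as an argument list."""
    return ["python3", "downloader.py", "--name", name, "--output_dir", str(output_dir)]


def expected_ir_dir(output_dir: Path, name: str, precision: str = "FP16") -> Path:
    """Guess the folder holding the IR files for a downloaded model.

    Assumption: the model ends up in <output_dir>/intel/<name>/<precision>,
    which matches the face-detection-retail-0004 paths in this tutorial.
    """
    return output_dir / "intel" / name / precision


if __name__ == "__main__":
    model = "face-detection-retail-0004"
    print(" ".join(build_download_command(model, Path.home())))
    print(expected_ir_dir(Path.home(), model))
```

Run the command from the model_downloader folder, as above, since downloader.py is referenced by a relative path.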

You’ll see two files within the directory:

$ ls -lh

total 1.3M

-rw-r--r-- 1 root root 1.2M Jul 28 12:40 face-detection-retail-0004.bin

-rw-r--r-- 1 root root 100K Jul 28 12:40 face-detection-retail-0004.xml

The model is in the OpenVINO Intermediate Representation (IR) format:

face-detection-retail-0004.xml - Describes the network topology.

face-detection-retail-0004.bin - Contains the weights and biases binary data.

This means we are ready to compile the model for the MyriadX!
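Because the compile step needs both halves of the IR, it can be worth checking that the .xml and .bin files are present before going further. The following is a small sketch using only the Python standard library; ir_pair is a hypothetical helper name, not part of OpenVINO or DepthAI.

```python
from pathlib import Path
from typing import Tuple


def ir_pair(model_dir: Path, name: str) -> Tuple[Path, Path]:
    """Return the (xml, bin) paths of an IR model, raising if either is missing."""
    xml_path = model_dir / f"{name}.xml"
    bin_path = model_dir / f"{name}.bin"
    missing = [p.name for p in (xml_path, bin_path) if not p.is_file()]
    if missing:
        raise FileNotFoundError(
            f"missing IR file(s) in {model_dir}: {', '.join(missing)}"
        )
    return xml_path, bin_path


if __name__ == "__main__":
    folder = Path.home() / "intel" / "face-detection-retail-0004" / "FP16"
    try:
        xml_file, bin_file = ir_pair(folder, "face-detection-retail-0004")
        print(f"topology: {xml_file}\nweights:  {bin_file}")
    except FileNotFoundError as err:
        print(err)
```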

Compile the model¶

The MyriadX chip used on our DepthAI board does not use the IR format files directly. Instead, we need to generate face-detection-retail-0004.blob using compile_tool.

Activate OpenVINO environment¶

To use compile_tool, we need to activate our OpenVINO environment.

First, let’s find the setupvars.sh file:

find ~/intel/ -name "setupvars.sh"

/home/root/intel/openvino_2021.4.582/data_processing/dl_streamer/bin/setupvars.sh

/home/root/intel/openvino_2021.4.582/opencv/setupvars.sh

/home/root/intel/openvino_2021.4.582/bin/setupvars.sh

We’re interested in the bin/setupvars.sh file, so let’s go ahead and source it to activate the environment:

source /home/root/intel/openvino_2021.4.582/bin/setupvars.sh

[setupvars.sh] OpenVINO environment initialized

If you see [setupvars.sh] OpenVINO environment initialized, then your environment should be initialized correctly.
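If you drive the later steps from a script, you may want to confirm that the environment is active from within that script as well. The sketch below assumes that setupvars.sh exports an INTEL_OPENVINO_DIR variable; treat that variable name as an assumption to verify against your installation. The helper openvino_env_dir is hypothetical.

```python
import os
from typing import Mapping, Optional


def openvino_env_dir(env: Optional[Mapping[str, str]] = None) -> Optional[str]:
    """Return the OpenVINO install dir recorded in the environment, if any.

    Assumption: sourcing setupvars.sh exports INTEL_OPENVINO_DIR.
    """
    env = os.environ if env is None else env
    value = env.get("INTEL_OPENVINO_DIR", "").strip()
    return value or None


if __name__ == "__main__":
    install_dir = openvino_env_dir()
    if install_dir:
        print(f"OpenVINO environment active: {install_dir}")
    else:
        print("OpenVINO environment not detected; source bin/setupvars.sh first")
```

Note that the check only sees the variable if Python is started from the same shell session in which setupvars.sh was sourced.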

Locate compile_tool¶

Let’s find where compile_tool is located. In your terminal, run:

find ~/intel/ -iname compile_tool

You should see output similar to this:

find ~/intel/ -iname compile_tool

/home/root/intel/openvino_2021.4.582/deployment_tools/tools/compile_tool/compile_tool

Save this path as you will need it in the next step, when running compile_tool.
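The same lookup can be sketched in Python, which is handy if you want to store the path in a variable for the next step. find_by_name is a hypothetical helper; unlike find -iname, this version only reports regular files, so a directory that happens to share the name is skipped.

```python
from pathlib import Path
from typing import List


def find_by_name(root: Path, name: str) -> List[Path]:
    """Recursively collect files under root whose name matches, ignoring case."""
    wanted = name.lower()
    return sorted(
        p for p in root.rglob("*")
        if p.is_file() and p.name.lower() == wanted
    )


if __name__ == "__main__":
    # Mirrors: find ~/intel/ -iname compile_tool
    for match in find_by_name(Path.home() / "intel", "compile_tool"):
        print(match)
```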

Run compile_tool¶

From the ~/intel/face-detection-retail-0004/FP16 folder, we will now call compile_tool to compile the model into face-detection-retail-0004.blob:

~/intel/openvino_2021.4.582/deployment_tools/tools/compile_tool/compile_tool -m face-detection-retail-0004.xml -ip U8 -d MYRIAD -VPU_NUMBER_OF_SHAVES 4 -VPU_NUMBER_OF_CMX_SLICES 4

You should see:

Inference Engine:

IE version ......... 2021.4.0

Build ........... 2021.4.0-3839-cd81789d294-releases/2021/4

Network inputs:

data : U8 / NCHW

Network outputs:

detection_out : FP16 / NCHW

[Warning][VPU][Config] Deprecated option was used : VPU_MYRIAD_PLATFORM

Done. LoadNetwork time elapsed: 1760 ms

Where’s the blob file? It’s located in the current folder:

~/intel/face-detection-retail-0004/FP16$ ls -l

total 2.6M

-rw-rw-r-- 1 root root 1176544 jul 28 19:32 face-detection-retail-0004.bin

-rw-rw-r-- 1 root root 1344256 jul 28 19:51 face-detection-retail-0004.blob

-rw-rw-r-- 1 root root 106171 jul 28 19:32 face-detection-retail-0004.xml
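If you compile models regularly, the compile_tool call above can be assembled in a script. The following sketch only reuses the flags shown in this tutorial (-m, -ip, -d, -VPU_NUMBER_OF_SHAVES, -VPU_NUMBER_OF_CMX_SLICES); build_compile_command is a hypothetical helper, and the default of 4 shaves and 4 CMX slices simply mirrors the command above.

```python
import shlex
from pathlib import Path
from typing import List


def build_compile_command(compile_tool: Path, model_xml: Path,
                          shaves: int = 4, cmx_slices: int = 4) -> List[str]:
    """Assemble the compile_tool invocation from this tutorial as an argument list."""
    return [
        str(compile_tool),
        "-m", str(model_xml),                      # IR topology file (.xml)
        "-ip", "U8",                               # input precision
        "-d", "MYRIAD",                            # target device
        "-VPU_NUMBER_OF_SHAVES", str(shaves),
        "-VPU_NUMBER_OF_CMX_SLICES", str(cmx_slices),
    ]


if __name__ == "__main__":
    cmd = build_compile_command(Path("compile_tool"),
                                Path("face-detection-retail-0004.xml"))
    # Print the command for review instead of executing it.
    print(shlex.join(cmd))
```

To actually run it, you could pass the list to subprocess.run(cmd, check=True) from a shell where the OpenVINO environment has been activated, using the full compile_tool path you saved earlier.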

Run and display the model output¶

With the neural network .blob in place, we’re ready to roll!

To verify that the model is running correctly, let’s slightly modify the program we created in the Hello World tutorial.

In particular, let’s change the setBlobPath invocation to load our model. Remember to replace the path with the correct one on your system!

- detection_nn.setBlobPath(str(blobconverter.from_zoo(name='mobilenet-ssd', shaves=6)))

+ detection_nn.setBlobPath("/path/to/face-detection-retail-0004.blob")

And that’s all!
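A wrong blob path is an easy mistake to make here, so you might validate the path before handing it to setBlobPath. This is a minimal sketch using only the standard library; resolve_blob_path is a hypothetical helper, not part of the DepthAI API, and the path in the usage comment is just the location used in this tutorial.

```python
from pathlib import Path


def resolve_blob_path(path: str) -> str:
    """Expand and validate a .blob path, returning it as an absolute string."""
    blob = Path(path).expanduser().resolve()
    if blob.suffix != ".blob":
        raise ValueError(f"expected a .blob file, got: {blob.name}")
    if not blob.is_file():
        raise FileNotFoundError(f"blob not found: {blob}")
    return str(blob)


# Possible usage inside the Hello World script (detection_nn comes from that script):
# detection_nn.setBlobPath(resolve_blob_path(
#     "~/intel/face-detection-retail-0004/FP16/face-detection-retail-0004.blob"))
```

Failing early with a clear message is usually easier to debug than an error raised later, when the pipeline is started on the device.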

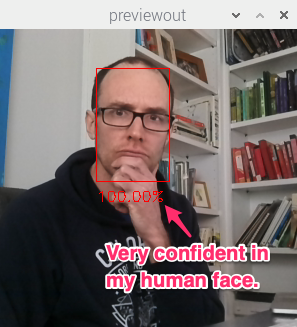

You should see output annotated output similar to:

Reviewing the flow¶

The flow we walked through works for other pre-trained object detection models in the Open Model Zoo:

Download the model:

python3 downloader.py --name face-detection-retail-0004 --output_dir ~/

Create the MyriadX blob file:

./compile_tool -m [INSERT PATH TO MODEL XML FILE] -ip U8 -d MYRIAD -VPU_NUMBER_OF_SHAVES 4 -VPU_NUMBER_OF_CMX_SLICES 4

Here are all supported compile_tool arguments.

Use this model in your script

You’re on your way! You can find the complete code for this tutorial on GitHub.

Note

You should also check out the Use a Pre-trained OpenVINO model tutorial, where this process is significantly simplified.